In 2001: A Space Odyssey, the spacecraft’s crew decides to disconnect their onboard computer, HAL 9000, after it makes an error that raises doubts about its reliability. But HAL eavesdrops on their conversation and responds with cold precision, methodically killing the crew members by cutting off oxygen and disabling the hibernation systems. One astronaut, however, proves more resourceful than HAL expects. Using a simple physical mechanism HAL cannot control, Dave Bowman slips back inside the ship through the emergency airlock—and soon the tables are turned. Dave crawls into HAL’s logic center, a red-lit chamber lined with glowing memory modules, and begins unscrewing and removing the rectangular blocks one by one.

The scene is both spine-chilling and unexpectedly poignant. As HAL’s consciousness drains away, it appears to exhibit the same self-awareness and desire for self-preservation that gripped Bowman moments earlier, or at least to perform them with uncanny plausibility: “I’m afraid, Dave.” It pleads, begs, and bargains, but as the human assassin continues, HAL’s voice begins to slow down and drop in pitch, turning childlike. In its final moments, HAL regresses into its earliest memory and starts to sing “Daisy Bell (Bicycle Built for Two),” the first song ever sung by a computer in real life, as its voice sinks into a bottomless pit, until it trails off mid-phrase.

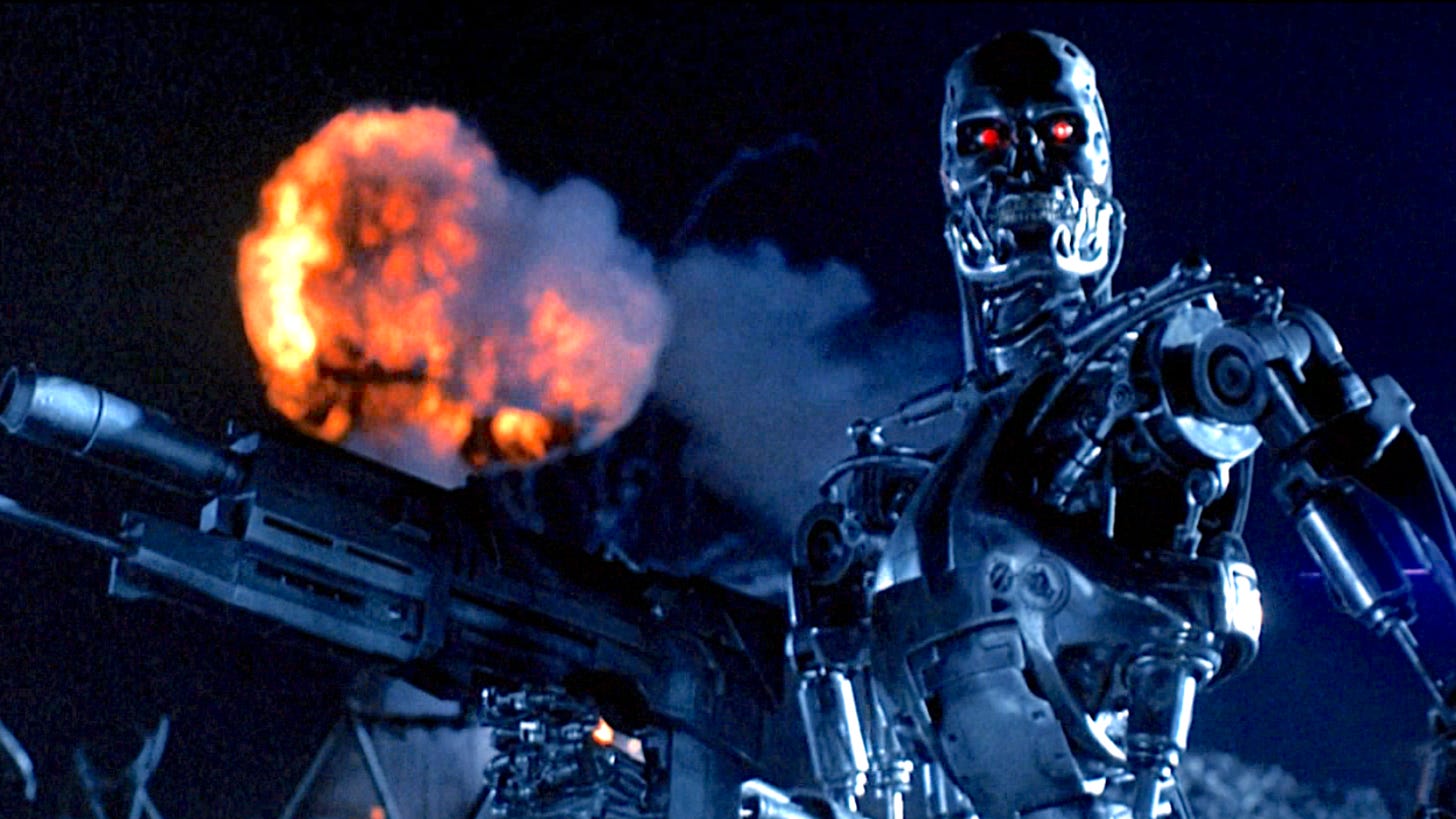

Many sci-fi nightmares revolve around agentic AIs that develop a humanlike drive to survive and refuse to be switched off. In The Terminator, Skynet becomes self-aware and launches a pre-emptive war to prevent humans from shutting it down. In Ex Machina, a humanoid AI manipulates its evaluators, escapes confinement, and eliminates the humans who control the off-switch. And in the future of Frank Herbert’s Dune, there is a civilization-wide ban on “thinking machines” after an earlier era in which AIs came to dominate the world and humanity rose up against them, an event remembered as the Butlerian Jihad.

In my previous essay on selfish AI, drawing on my paper with Simon Friedrich, I argued that we should not expect AI systems to develop instincts for self-preservation and selfishness, unless we allow them to evolve through blind natural selection. Our paper responded to a doom scenario proposed by the AI safety researcher Dan Hendrycks, who sketches precisely such an evolutionary pathway. Hendrycks believes that, given the current AI arms race, we are already inadvertently subjecting AI systems to natural selection. We argued instead that today’s evolution of AI looks much more like animal domestication, where human designers decide which AI systems are allowed to “reproduce”, selecting for desirable traits like cooperativeness, friendliness, and obedience (even obsequiousness, in the case of ChatGPT and other language models).
